AI Agents for Enterprise: Secure Custom Development & Platform Guide for 2026

Choose the best enterprise AI agent platform or build secure custom multi-agent systems. A 2026 guide for CTOs on architecture, compliance, orchestration, and secure AI integration.


Enterprise AI has entered a new phase. What began as experimentation with chatbots and internal copilots is now evolving into something far more strategic: AI agents embedded directly into core business workflows. For organizations with 500+ employees operating in regulated industries, this shift is not about novelty — it is about operational leverage, compliance resilience, and long-term competitiveness.

At the same time, the landscape has become overwhelmingly complex.

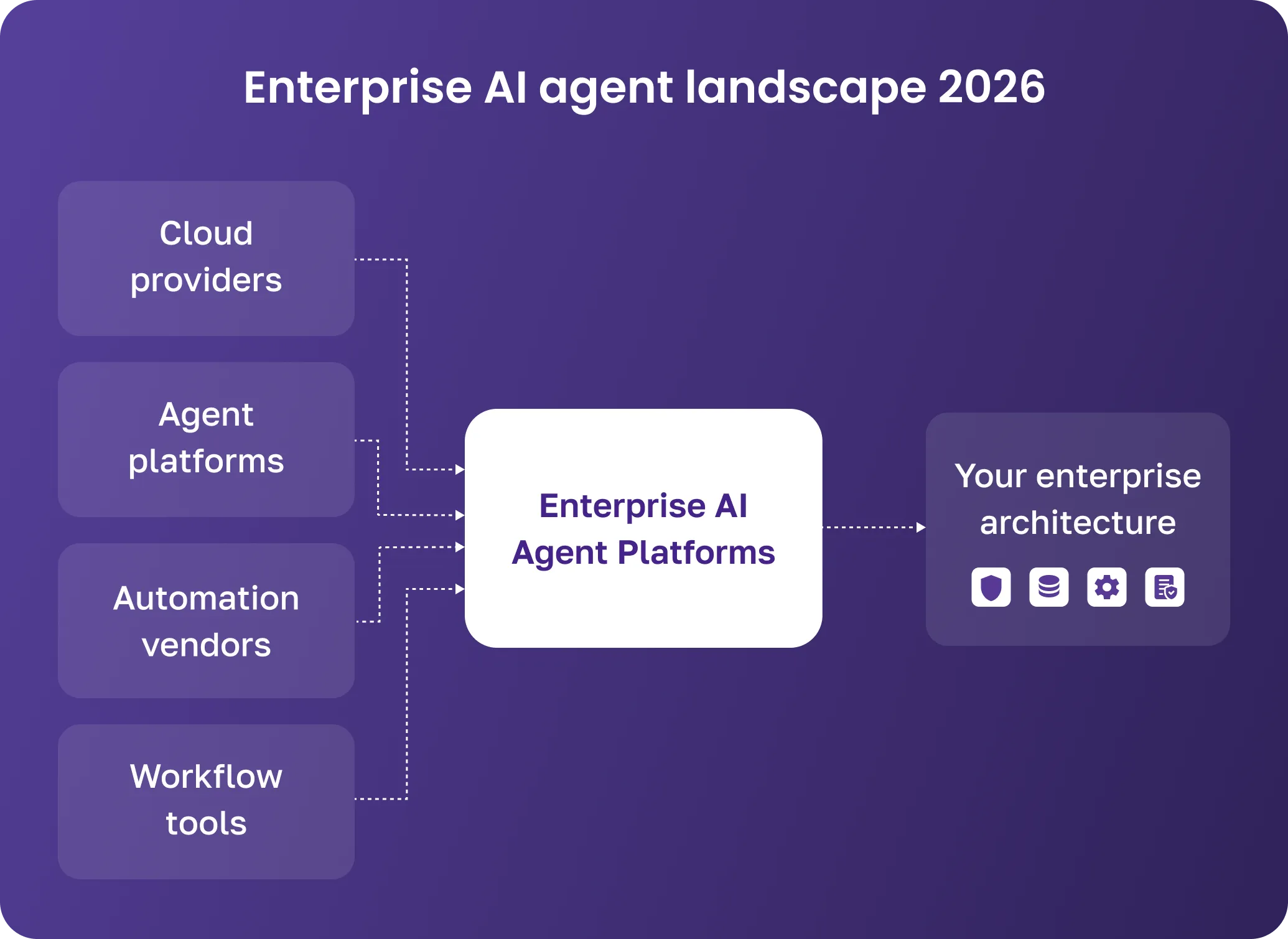

The market is flooded with enterprise AI agent platforms. Cloud providers, AI startups, automation vendors, and workflow companies all promise intelligent agents capable of transforming operations. Demos look impressive. Marketing language sounds convincing. Yet when enterprise leaders begin a serious evaluation, critical questions quickly surface:

- Which platforms are actually enterprise-ready?

- How do we compare vendors objectively?

- Can these solutions meet our security and compliance standards?

- What happens when we need deeper customization?

- How do we orchestrate multiple agents across complex workflows?

- Do we have the internal expertise to design and maintain such systems?

What makes this evaluation particularly difficult is that most platforms are optimized for demonstration rather than production complexity. They showcase conversational fluency, but rarely expose architectural depth: how access control is enforced at the field level, how multi-agent workflows are versioned, how reasoning traces are stored for audit, or how escalation paths are encoded into system logic. For enterprises operating in regulated sectors, these architectural nuances are far more important than interface design or out-of-the-box templates.

For CTOs, VPs of Engineering, IT Directors, Heads of Operations, and Product Owners, the challenge is not whether AI agents are useful. The challenge is how to implement them correctly — securely, scalably, and in alignment with existing architecture.

This guide is written specifically for enterprise decision-makers in finance, insurance, healthcare, retail, and the public sector — industries where compliance, governance, and data protection are non-negotiable.

In this long-form guide, you will learn:

- What enterprise AI agents actually are (and how they differ from generic AI tools)

- How to evaluate enterprise AI agent platforms

- When to choose off-the-shelf solutions and when to invest in custom enterprise AI agent development

- How to design secure AI architectures that pass regulatory scrutiny

- How multi-agent orchestration works in complex business processes

- What a real-world implementation roadmap looks like

By the end, you will have a structured framework for making informed architectural decisions for 2026 and beyond.

What Are Enterprise AI Agents?

The term “AI agent” is everywhere, but in enterprise architecture, it needs a precise meaning. In this guide, an enterprise AI agent is not just a chatbot that answers questions. It is a production system that combines reasoning, structured data access, permissions, workflow integration, and the ability to take actions inside your business environment.

Technically, an enterprise agent usually brings together one or more AI models, a retrieval layer for internal data, integrations with systems such as CRM, ERP, claims, or ticketing platforms, explicit decision logic, and an orchestration layer that coordinates tasks. Logging, monitoring, and human-in-the-loop controls sit around this core so the system remains observable and controllable in production.

| Criteria | Generic Chatbot | Enterprise AI Agent |
|---|---|---|
| Data | Public/broad web data | Internal, permissioned data sources (CRM, ERP, claims, tickets) |
| Actions | Answers questions | Takes actions in systems (update records, create tickets, trigger workflows) |
| Governance | Minimal logging | Full audit trail, role-based access, policy constraints |
| Integration | Sits on top of tools | Embedded into workflows and infrastructure |

When designed this way, enterprise AI agents stop being “smart UIs” and become part of the digital infrastructure. They sit alongside APIs, microservices, data warehouses, and workflow engines, interacting with them in structured, auditable ways. AI is treated as a governed component that must meet the same reliability and security standards as any other production service.

Most organizations started with assistive AI: copilots that draft emails, summarize documents, or answer internal questions. These tools improve productivity but rarely execute business actions themselves. The next stage is action‑oriented AI, where agents interpret inputs, retrieve context, generate structured decisions, trigger downstream processes, and escalate exceptions to humans when needed.

In an insurance workflow, for example, an agent might parse a claim, pull policy data, check coverage, flag inconsistencies, draft documentation, and route the case for approval. In that model, the agent is part of the workflow rather than a separate helper sitting on top of it.

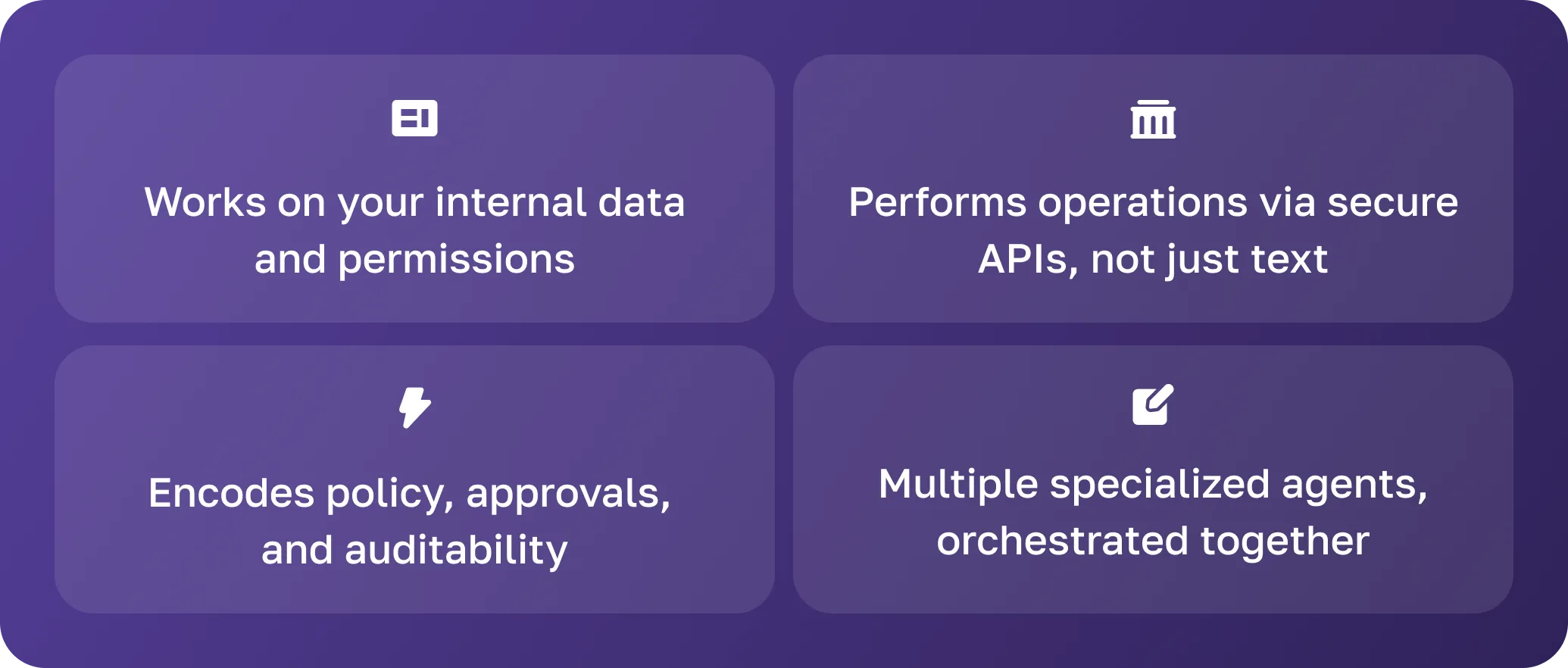

Enterprise AI agents differ from consumer tools by a few core characteristics. They are context‑aware: they operate on approved internal data, follow your taxonomies and schemas, and respect document‑level permissions and workflow state, rather than roaming the open internet. They are action‑oriented: they update records, create tickets, initiate payments, or trigger checks through secure API integrations, validation layers, and transaction logging, not just text generation.

They are also governed. Policy enforcement, role‑based access, approval flows, and regulatory constraints are encoded into the agent’s allowed actions. Every meaningful step is logged and observable so that you can replay decisions, trace which model and version were used, and satisfy audit and compliance requirements.

Finally, enterprise agents are usually built to be composable rather than monolithic. Instead of a single “super‑agent,” you get a set of planner, domain, validation, and risk agents coordinated by an orchestrator and overseen by humans where necessary. This multi‑agent approach makes systems easier to reason about, scale, and maintain over time.

Enterprise AI Agent Platforms — Overview & Comparison

The rapid growth in enterprise AI demand has created a crowded marketplace. Major cloud vendors provide agent frameworks. Startups offer agent orchestration platforms. Workflow companies integrate AI layers into automation tools.

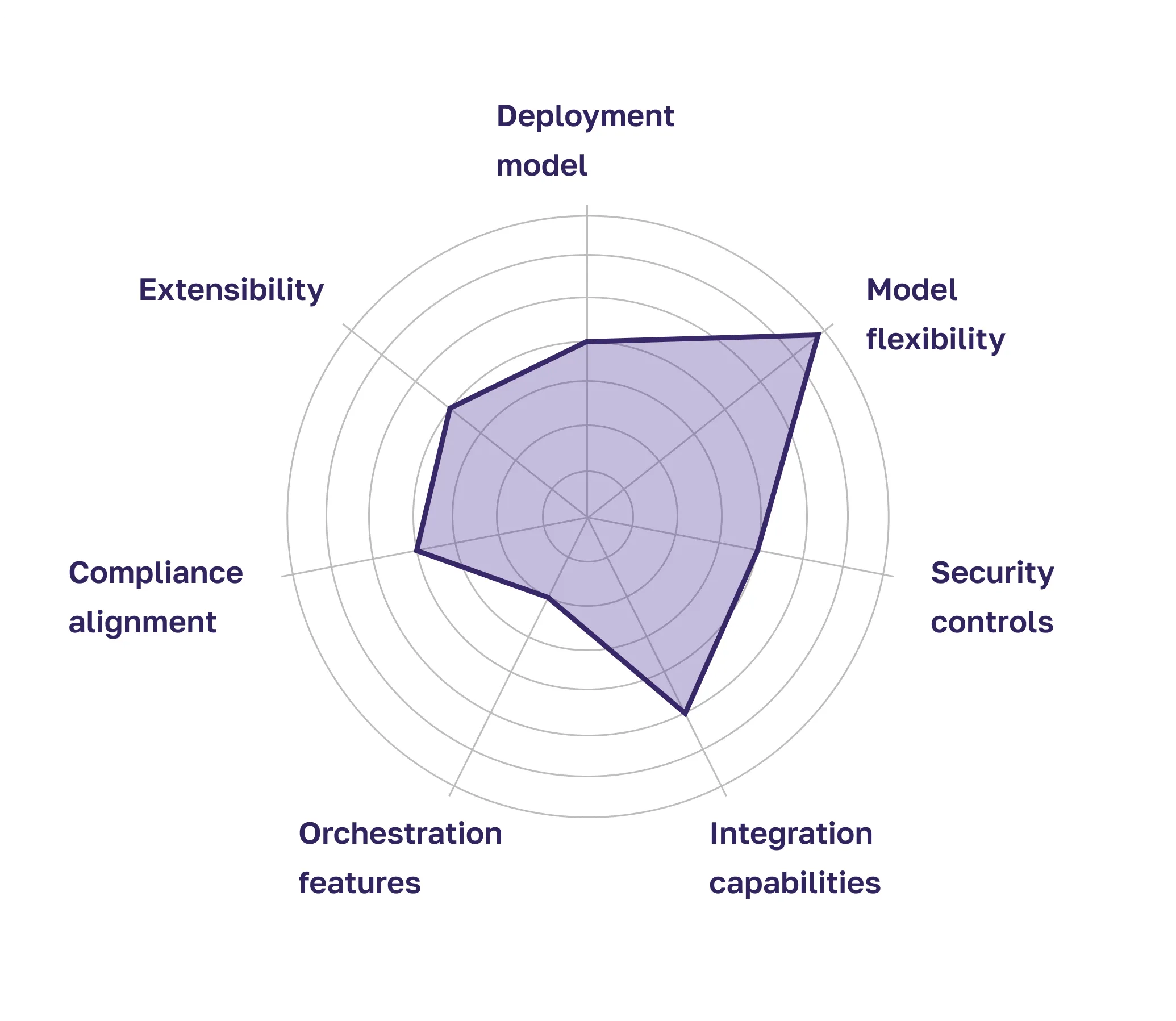

For enterprise leaders, the question is not which platform is most innovative. The question is which solution aligns with:

- Security requirements

- Data residency constraints

- Compliance mandates

- Existing IT infrastructure

- Internal engineering capabilities

- Long-term scalability goals

Evaluating platforms against these dimensions requires structured criteria, which keeps the evaluation from being driven solely by demo impressions.

Off-the-Shelf Platforms vs Custom Enterprise AI Agent Development

Off-the-shelf enterprise AI agent platforms provide speed. They often include:

- Pre-built agent templates

- Visual configuration interfaces

- Hosted model infrastructure

- Basic monitoring dashboards

- Built-in connectors to common SaaS tools

For low-risk, internal productivity scenarios, these solutions may be entirely sufficient.

However, large enterprises frequently encounter limitations:

- Inability to deeply integrate with proprietary systems

- Insufficient granularity in access controls

- Restricted orchestration flexibility

- Vendor dependency for roadmap features

- Constraints in deploying in regulated environments

Custom enterprise AI agent development, in contrast, prioritizes architectural control.

With custom development:

- Deployment can occur in a private cloud or on-prem environments

- Multi-model strategies can be implemented

- Fine-grained RBAC policies can be enforced

- Complex orchestration logic can be designed

- Domain-specific validation layers can be embedded

- Compliance controls can be encoded into the architecture

The trade-off is initial design complexity. But for mission-critical workflows, this investment reduces long-term operational risk.

| Criteria | Off-the-Shelf Platform | Custom Development Partner |
|---|---|---|
| Deployment flexibility | Typically SaaS-focused | Cloud, hybrid, or on-prem |
| Security & compliance | Standard configurations | Built around your regulatory environment |
| Orchestration complexity | Basic | Fully customizable multi-agent systems |
| Integration depth | Pre-built connectors | Deep integration with proprietary systems |
| Ownership & roadmap control | Platform-controlled | Enterprise-controlled |

For organizations with high compliance requirements and complex processes, custom development often becomes a strategic necessity rather than an optional enhancement.

This is especially true when AI agents are expected to handle cross-departmental workflows — for example, underwriting decisions that simultaneously impact finance, risk, compliance, and customer service. In such environments, architectural ownership becomes critical. Enterprises must be able to define how decisions are decomposed, how exceptions are escalated, how data flows between systems, and how future regulatory updates are incorporated into the agent’s logic. Off-the-shelf flexibility often ends where true enterprise complexity begins.

While many vendors position themselves as AI platforms, few take ownership of architectural outcomes. Jetruby operates differently. We are platform-agnostic but architecture-led. Whether leveraging existing enterprise AI platforms or building fully custom multi-agent systems, our priority is to design solutions that seamlessly integrate with your IT ecosystem, security posture, and operational workflows. The platform is a tool — the architecture is the strategy.

Security, Governance & Vetting Enterprise AI Agents

Security is still the main reason enterprise AI projects stall or never reach production. As soon as agents start touching real workflows in finance, insurance, or healthcare, the questions are always the same: how to prevent data leakage, defend against prompt injection, enforce access control, and prove every material decision during an audit.

In practice, that means designing for data protection first. Permission-aware retrieval, masking and tokenization of sensitive fields, encryption, and network segmentation become default requirements rather than nice-to-haves. Prompt‑injection defenses must also be built into the platform itself through hardened system prompts, input sanitization, context isolation, output validation, and policy‑based response filtering.
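
To make permission-aware retrieval and field masking concrete, here is a minimal Python sketch. The `Document` type, the `SENSITIVE_FIELDS` set, the role names, and the `retrieve` function are all hypothetical illustrations, not part of any specific platform; a production system would enforce the same checks inside the retrieval service and the data store itself.

```python
from dataclasses import dataclass

# Hypothetical classification: which fields count as sensitive (assumption).
SENSITIVE_FIELDS = {"ssn", "account_number"}


@dataclass
class Document:
    doc_id: str
    allowed_roles: frozenset  # roles permitted to read this document
    fields: dict


def mask(value: str) -> str:
    """Replace all but the last four characters with asterisks."""
    if len(value) <= 4:
        return "*" * len(value)
    return "*" * (len(value) - 4) + value[-4:]


def retrieve(docs, role, can_view_sensitive=False):
    """Return only documents the role may read, masking sensitive fields."""
    results = []
    for doc in docs:
        if role not in doc.allowed_roles:
            continue  # filtered out before anything reaches the model context
        visible = {
            key: mask(str(val)) if key in SENSITIVE_FIELDS and not can_view_sensitive else val
            for key, val in doc.fields.items()
        }
        results.append({"doc_id": doc.doc_id, **visible})
    return results


docs = [
    Document("claim-1", frozenset({"claims_analyst"}), {"ssn": "123456789", "status": "open"}),
    Document("hr-7", frozenset({"hr"}), {"ssn": "987654321", "status": "closed"}),
]
context = retrieve(docs, role="claims_analyst")
```

The point of the design is ordering: the permission check and the masking both run before retrieved text is placed into a prompt, so the model never sees data the calling role could not see directly.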

On top of that, enterprises need governance, explainability, and vendor accountability rather than just good demos. Every significant agent action should be reconstructable — including which data was accessed, which model and version were used, which tools were called, and exactly what was executed — via structured, immutable logging, versioning, monitoring, and replay capabilities.
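
One common way to approximate "structured, immutable logging" in application code is a hash-chained, append-only log. The sketch below is illustrative only: the `AuditLog` class and the event fields are assumptions, and hash chaining makes tampering detectable rather than impossible, so it would normally be paired with write-once storage.

```python
import hashlib
import json


class AuditLog:
    """Append-only log where each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64  # placeholder hash used before the first entry

    def __init__(self):
        self._entries = []

    def append(self, event: dict) -> str:
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        payload = json.dumps(event, sort_keys=True)
        entry_hash = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
        self._entries.append({"event": event, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = self.GENESIS
        for entry in self._entries:
            payload = json.dumps(entry["event"], sort_keys=True)
            expected = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"agent": "claims_agent", "model": "model-v1", "tool": "policy_lookup"})
log.append({"agent": "claims_agent", "model": "model-v1", "tool": "draft_summary"})
chain_is_intact = log.verify()
```

Recording the model name and version alongside each tool call, as in the example events, is what later allows a decision to be replayed against the same configuration.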

Regulatory frameworks like GDPR, HIPAA, and financial audit standards then become architectural constraints from day one, shaping deployment choices and retention policies.

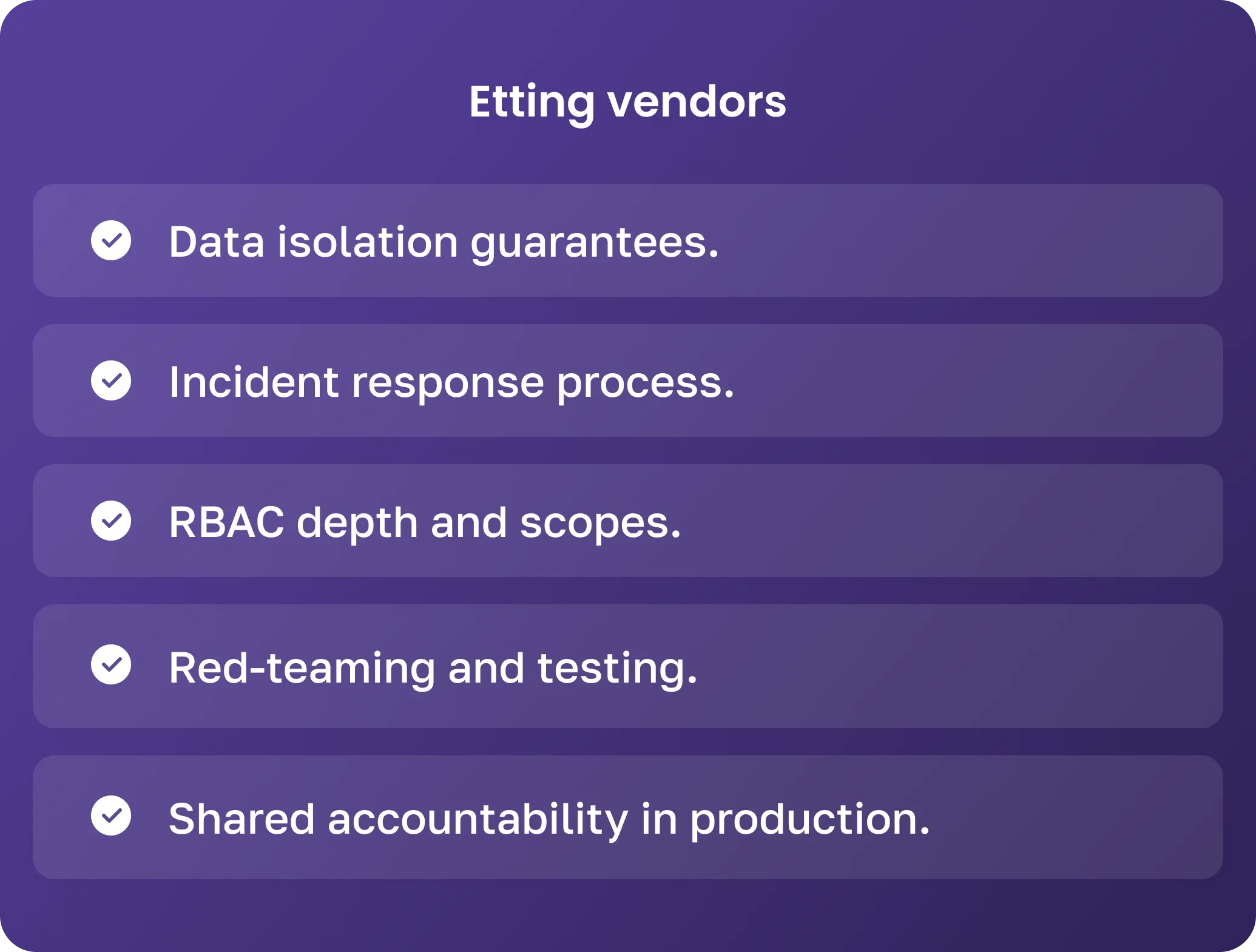

Vendor and platform vetting, in turn, shifts from a procurement task to a governance function. The evaluation focuses on data isolation guarantees, incident-response readiness, RBAC depth, red‑teaming practices, and a provider’s willingness to share responsibility for production behavior.

How We Design Secure Enterprise AI Agents

At Jetruby, the design of enterprise AI agents begins with a security-first mindset and an architecture-driven approach. We treat AI agents not as standalone tools, but as integral components of enterprise infrastructure — capable of executing business-critical tasks while fully respecting regulatory and organizational boundaries. Security and compliance are not afterthoughts; they are embedded into every layer of design and implementation.

The first step in our methodology is comprehensive risk mapping. This process involves multiple dimensions:

- Data Classification Tiers. We assess the sensitivity of every dataset the agent may access, from public knowledge repositories to highly confidential financial or healthcare records. This classification guides the design of access controls, encryption, and monitoring.

- Access Hierarchies. We map user roles and system privileges, ensuring agents can only perform actions appropriate to their defined responsibilities. For example, an AI agent that analyzes claims data may retrieve sensitive documents but cannot authorize payments without human oversight.

- Regulatory Obligations. Different industries impose distinct compliance requirements, such as GDPR for data privacy, HIPAA for health information, or SOC 2 for operational controls. We evaluate these obligations early and integrate them into the system design so that agents operate within these legal and regulatory frameworks.

- Threat Models. We identify potential attack vectors, including prompt injection, data exfiltration, unauthorized API calls, and adversarial input. This allows us to preemptively design countermeasures and protective layers around each agent’s workflow.
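
The access-hierarchy example above (an agent that may read claim documents but cannot authorize payments on its own) can be expressed as a deny-by-default policy table. This is a minimal sketch; the role name, action names, and `POLICY` structure are hypothetical, and a real deployment would typically load such rules from a policy engine or identity provider.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


# Hypothetical policy table: role -> action -> decision (assumption).
POLICY = {
    "claims_agent": {
        "read_claim_documents": Decision.ALLOW,
        "draft_claim_summary": Decision.ALLOW,
        "authorize_payment": Decision.REQUIRE_APPROVAL,
    },
}


def authorize(agent_role: str, action: str) -> Decision:
    """Deny by default: anything not listed for the role is rejected."""
    return POLICY.get(agent_role, {}).get(action, Decision.DENY)


read_decision = authorize("claims_agent", "read_claim_documents")
payment_decision = authorize("claims_agent", "authorize_payment")
unknown_decision = authorize("claims_agent", "delete_policy_record")
```

Deny-by-default matters here because new tools tend to be added faster than policies are reviewed; an unlisted action fails closed instead of inheriting access.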

Once risks are mapped, we proceed to the architectural and technical design of secure AI agents:

- Isolated Model Hosting Environments. Models are deployed in controlled environments, often in private clouds or on-premises infrastructure. Network segmentation and encrypted communication channels ensure that models cannot access or leak sensitive data beyond their scope.

- Secure Retrieval Layers. Agents retrieve data through tightly controlled interfaces, with field-level masking and query validation. This ensures that sensitive information is never exposed accidentally.

- Policy-Aware Prompt Engineering. All instructions given to the AI are governed by business rules and compliance constraints. Prompts are engineered to prevent policy violations, reduce the risk of unintended outputs, and guide the agent to produce auditable and actionable results.

- Validation Pipelines. Before executing critical actions, the agent checks its outputs against defined validation rules. This step ensures accuracy, reduces operational risk, and provides an additional layer of control.

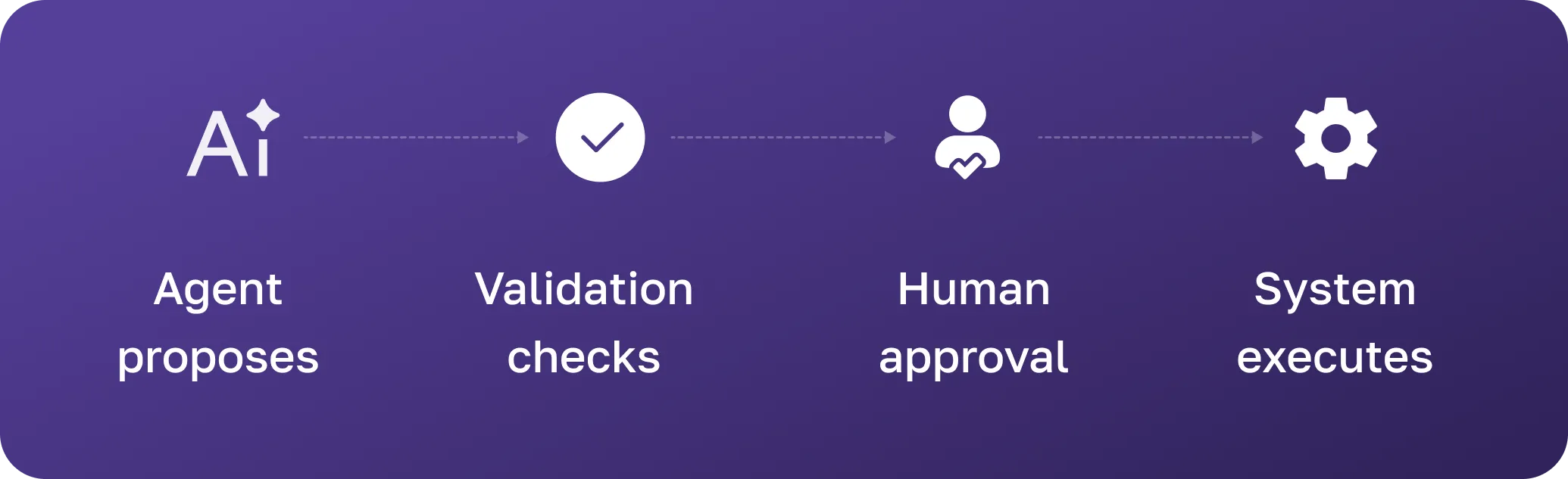

- Approval-Based Action Gates. For high-risk or irreversible operations, human-in-the-loop checkpoints are implemented. Agents may prepare recommendations or pre-fill actions, but approval is required before execution.

- Full Audit Logging Frameworks. Every prompt, intermediate reasoning step, model output, API call, and system action is logged in structured, immutable records. This allows organizations to trace every decision, reconstruct processes, and demonstrate compliance to auditors.
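
As a rough illustration of how a validation pipeline and an approval-based action gate fit together, consider the sketch below. The `ProposedAction` type, the validation rules, and the `HIGH_RISK_ACTIONS` set are assumptions made for the example; actual rules would come from the domain and the risk mapping described earlier.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical set of actions that always need a human sign-off (assumption).
HIGH_RISK_ACTIONS = {"issue_payment"}


@dataclass
class ProposedAction:
    name: str
    amount: float
    claim_id: str
    approved_by: Optional[str] = None  # filled in by the human reviewer


def validate(action: ProposedAction, policy_limit: float) -> List[str]:
    """Run rule-based checks and return a list of violations (empty if valid)."""
    errors = []
    if not action.claim_id:
        errors.append("missing claim id")
    if action.amount <= 0:
        errors.append("amount must be positive")
    if action.amount > policy_limit:
        errors.append("amount exceeds policy limit")
    return errors


def execute(action: ProposedAction, policy_limit: float) -> str:
    """Validate first, then gate high-risk actions on human approval."""
    errors = validate(action, policy_limit)
    if errors:
        return "rejected: " + "; ".join(errors)
    if action.name in HIGH_RISK_ACTIONS and action.approved_by is None:
        return "pending_approval"
    return "executed"


status = execute(ProposedAction("issue_payment", 500.0, "C-1"), policy_limit=1000.0)
```

In this arrangement the agent can prepare and pre-fill the action, but the call only proceeds to `"executed"` once `approved_by` has been set by a person with the right authority.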

By embedding security into architecture, operations, and workflow design, we ensure that enterprise AI agents not only act autonomously but do so safely, auditably, and reliably, giving organizations confidence that AI can operate as a trusted component of their business infrastructure.

Orchestration & Multi-Agent Systems for Enterprise

As enterprise workflows grow in complexity, single-agent solutions often become insufficient.

Multi-agent systems provide:

- Separation of responsibilities

- Improved explainability

- Fault isolation

- Scalability

Instead of assigning all reasoning to one model, responsibilities are distributed.

Typical Enterprise Pipeline

A common multi-agent architecture includes:

- Planner Agent. Decomposes tasks and determines workflow sequencing.

- Domain-Specific Agents. Handle specialized functions such as compliance validation, document parsing, risk scoring, or policy interpretation.

- Orchestrator. Coordinates communication between agents, maintains state, resolves conflicts, and aggregates outputs.

- Human Oversight Layer. Reviews flagged cases and approves high-risk decisions.
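
The pipeline above can be sketched in a few lines of Python. Everything here is a simplified stand-in: the planner is hard-coded rather than model-driven, the two domain agents are plain functions, and the 10,000 threshold for flagging a case is an arbitrary assumption chosen for illustration.

```python
def plan(task: str) -> list:
    """Planner: decompose a task into ordered steps (hard-coded in this sketch)."""
    return ["parse_document", "score_risk"]


def parse_document(state: dict) -> dict:
    """Domain agent: extract structured fields from the raw input."""
    state["parsed"] = {"claim_amount": state["input"]["claim_amount"]}
    return state


def score_risk(state: dict) -> dict:
    """Domain agent: assign a coarse risk label (threshold is an assumption)."""
    state["risk"] = "high" if state["parsed"]["claim_amount"] > 10_000 else "low"
    return state


DOMAIN_AGENTS = {"parse_document": parse_document, "score_risk": score_risk}


def orchestrate(task: str, payload: dict) -> dict:
    """Orchestrator: run planned steps in order, keep shared state and a trace."""
    state = {"input": payload, "trace": []}
    for step in plan(task):
        state = DOMAIN_AGENTS[step](state)
        state["trace"].append(step)
    # Human oversight layer: high-risk cases are flagged instead of auto-approved.
    state["needs_human_review"] = state["risk"] == "high"
    return state


result = orchestrate("assess_claim", {"claim_amount": 25_000})
```

Keeping each agent behind a narrow function boundary is what gives the fault isolation and explainability listed earlier: the `trace` shows which agent ran, and a failing step can be replaced without touching the others.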

This layered architecture increases reliability and makes governance more transparent.

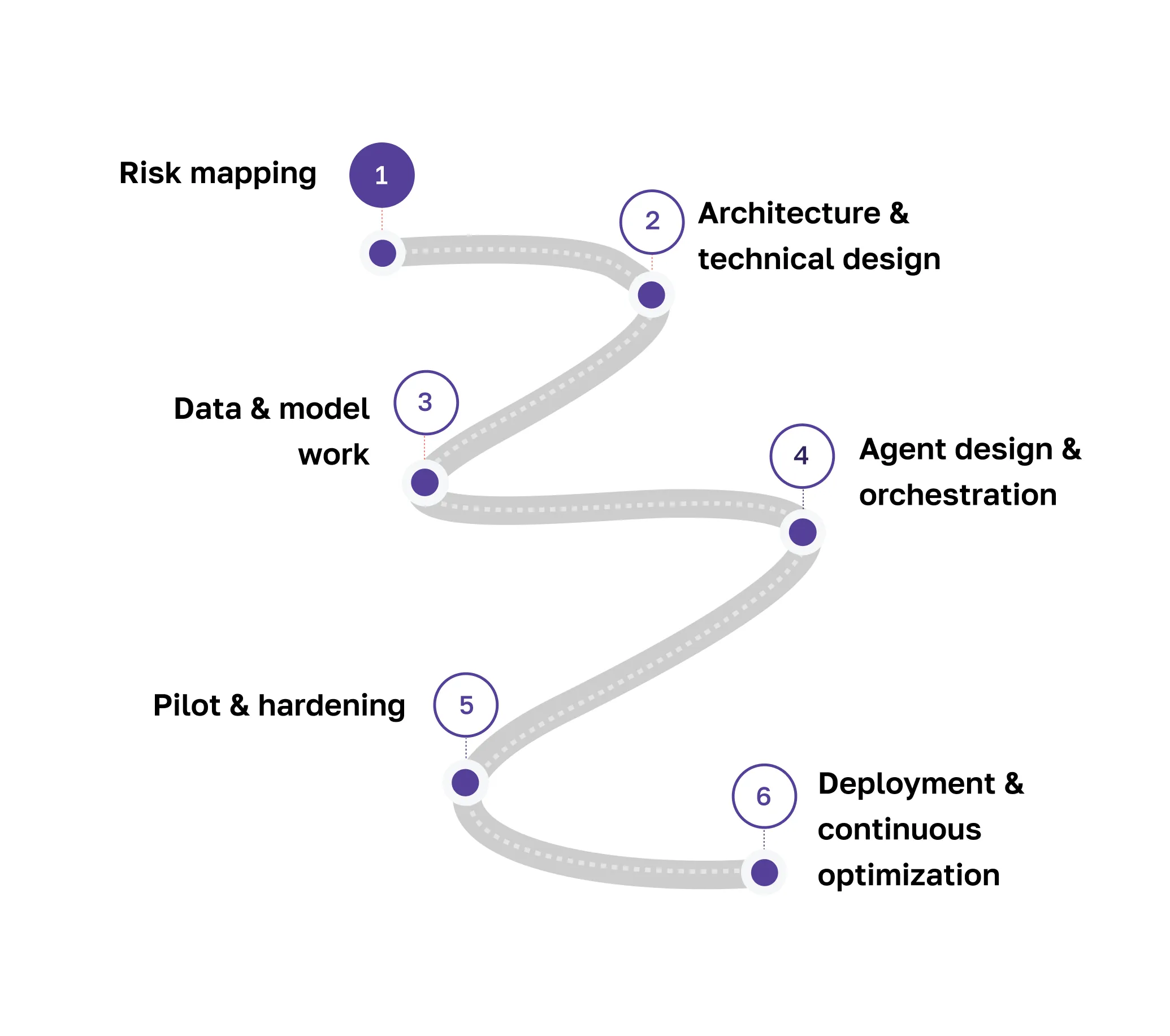

How to Build a Custom Enterprise AI Agent: Our Process

Implementing enterprise AI agents requires a structured, methodical approach. Unlike simple automation scripts or off-the-shelf AI tools, enterprise-grade agents must integrate seamlessly with business processes, comply with regulatory standards, and operate reliably at scale. At Jetruby, our process combines rigorous architecture, security-first design, and practical implementation steps to ensure every agent is robust, auditable, and actionable.

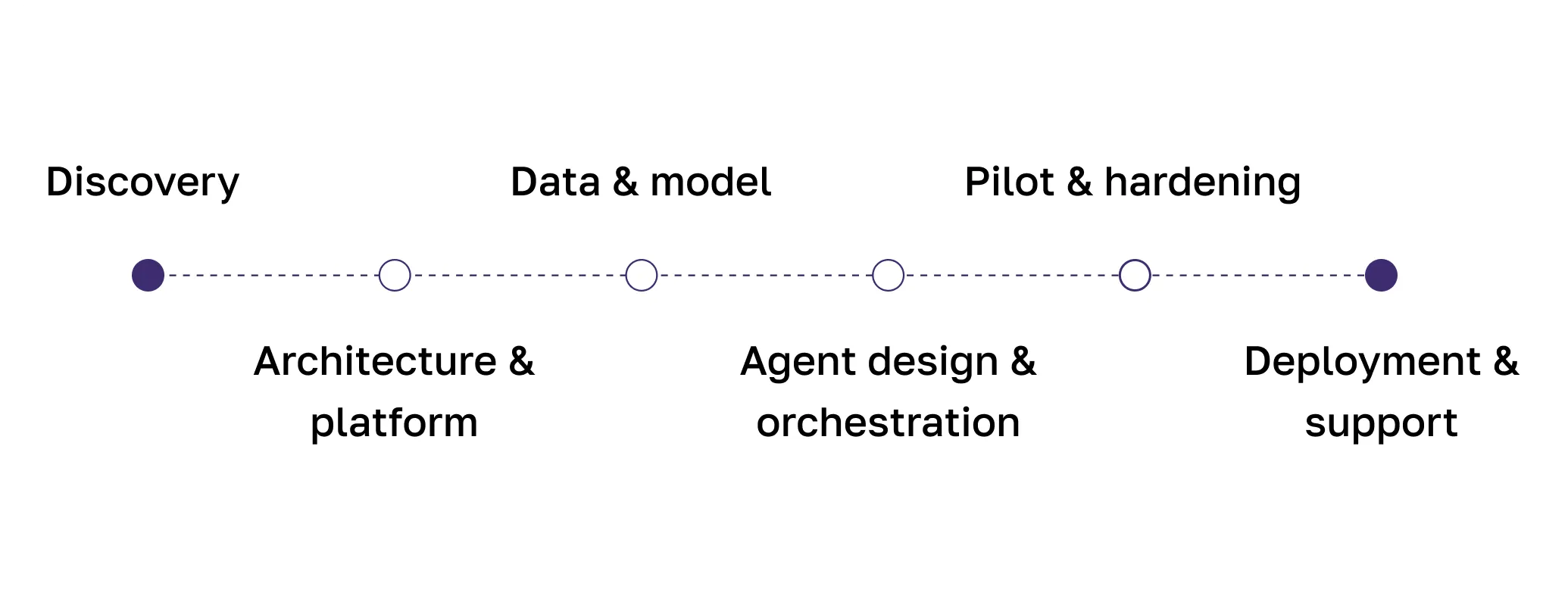

1. Discovery

The foundation of any successful AI agent project is a comprehensive discovery phase. During this stage, we:

- Conduct stakeholder interviews across business, compliance, operations, and IT teams to understand real-world requirements.

- Perform workflow mapping, capturing every step of the existing process and identifying inefficiencies or pain points that can be automated or augmented by AI.

- Execute technical audits of data sources, IT infrastructure, and existing automation tools to identify integration opportunities and constraints.

- Define measurable success metrics, such as reduced processing time, lower error rates, or increased compliance adherence.

- Identify risk boundaries, including data sensitivity, regulatory obligations, and operational impact thresholds.

During discovery, we also quantify automation boundaries. Not every workflow should be fully autonomous from day one. We evaluate decision criticality, financial exposure, regulatory implications, and reputational risk to determine which components can be automated immediately and which require phased autonomy with human-in-the-loop controls. This structured risk calibration prevents over-automation while still delivering measurable efficiency gains early in the implementation process.
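
One simple way to make this risk calibration explicit is to score each workflow on the four factors named above and let the worst score set the autonomy tier. The sketch below is an assumption-laden illustration: the 1 to 5 scale, the tier names, and the cut-offs are invented for the example and would need to be agreed with risk and compliance teams.

```python
# The four factors named in the text; scores are assumed to range from 1 (low) to 5 (high).
FACTORS = (
    "decision_criticality",
    "financial_exposure",
    "regulatory_impact",
    "reputational_risk",
)


def autonomy_tier(scores: dict) -> str:
    """Map factor scores to an autonomy tier; the worst factor decides."""
    missing = [factor for factor in FACTORS if factor not in scores]
    if missing:
        raise ValueError(f"missing scores for: {', '.join(missing)}")
    worst = max(scores[factor] for factor in FACTORS)
    if worst >= 4:
        return "human_decides"      # agent only prepares material
    if worst == 3:
        return "human_approves"     # agent proposes, a person signs off
    return "autonomous"             # agent acts, with logging and sampling


tier = autonomy_tier({
    "decision_criticality": 2,
    "financial_exposure": 3,
    "regulatory_impact": 2,
    "reputational_risk": 1,
})
```

Using the maximum rather than an average is a deliberately conservative choice: a workflow with one severe exposure is not made safe by being low-risk on the other three dimensions.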

The discovery phase ensures that every AI agent we build addresses real business needs rather than theoretical use cases. It also helps align expectations and set realistic project timelines.

2. Architecture & Platform Selection

With discovery insights in hand, we move to designing the enterprise-grade architecture:

- Evaluate deployment options, including cloud, on-premises, or hybrid, based on security and compliance requirements.

- Decide on a model hosting strategy, whether to leverage internal models, third-party LLMs, or a combination of both for domain-specific tasks.

- Define integration patterns for core systems, including ERP, CRM, ticketing systems, and proprietary databases.

- Establish orchestration frameworks and communication protocols for multi-agent workflows.

- Plan security and compliance layers from the ground up, embedding RBAC, encryption, and audit trails into the architecture.

Architectural design at the enterprise level also includes scalability and future-proofing considerations. AI agent systems must support horizontal scaling, model upgrades, version rollback, and extensible integration. We define containerization strategies, API governance standards, and environment segregation policies to ensure that future feature expansion — such as adding new domain-specific agents — does not require architectural rework. Enterprise AI should scale predictably, not accumulate technical debt.

At this stage, every architectural decision is documented in a blueprint that guides the subsequent development, ensuring alignment with enterprise IT standards and long-term maintainability.

3. Data & Model Work

Enterprise AI agents depend heavily on high-quality, secure data. In this phase, we:

- Build secure retrieval pipelines that provide agents access to approved data sources while maintaining strict access controls.

- Curate domain-specific knowledge bases, cleaning and structuring data for optimal AI comprehension.

- Conduct prompt engineering and fine-tuning to ensure that agents understand context, follow business rules, and generate outputs aligned with policy requirements.

- Implement validation frameworks to test the accuracy and reliability of model outputs before they are used in operational workflows.

- Set up data governance protocols for ongoing updates and quality assurance.

This step ensures that agents not only have access to the right information but can interpret it accurately and act upon it safely.

4. Agent Design & Orchestration

At this stage, we move from data preparation to functional design:

- Define agent roles and responsibilities, specifying which tasks each agent will handle and how they interact with other agents.

- Establish decision boundaries, including confidence thresholds and escalation rules for human oversight.

- Design multi-agent orchestration, including sequencing of tasks, conflict resolution, and output aggregation.

- Test sandbox workflows to simulate real operational conditions, validating both performance and adherence to policy constraints.

- Integrate logging and observability layers to ensure every action and decision can be monitored and audited.

At this stage, we formalize decision transparency models. Every agent action must be explainable in business terms, not only technical traces. We design reasoning summaries, confidence-scoring mechanisms, and structured output formats that enable business stakeholders to understand why a specific recommendation or action was produced. This is particularly critical in finance, healthcare, and insurance environments where explainability is directly tied to compliance and audit readiness.
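
A structured output format with confidence-based routing might look like the following sketch. The `AgentDecision` fields, the route names, and the 0.9 and 0.6 thresholds are hypothetical; in practice thresholds are tuned per workflow, and model-reported confidence should be calibrated before it is trusted for routing.

```python
from dataclasses import dataclass, asdict


@dataclass
class AgentDecision:
    recommendation: str
    confidence: float        # assumed to be calibrated to the range 0.0 to 1.0
    reasoning_summary: str   # business-readable explanation, not a raw trace
    route: str = ""


def route_decision(decision: AgentDecision,
                   auto_threshold: float = 0.9,
                   review_threshold: float = 0.6) -> AgentDecision:
    """Set the route based on confidence; thresholds are illustrative defaults."""
    if decision.confidence >= auto_threshold:
        decision.route = "auto_execute"
    elif decision.confidence >= review_threshold:
        decision.route = "human_review"
    else:
        decision.route = "escalate"
    return decision


decision = route_decision(AgentDecision(
    recommendation="approve_claim",
    confidence=0.72,
    reasoning_summary="Coverage confirmed; repair invoice is within the policy limit.",
))
record = asdict(decision)  # plain dict, ready to be logged or shown to a reviewer
```

Because the reasoning summary travels with the decision as a plain field, the same record can be shown to a business reviewer and stored for audit without reformatting.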

The goal is to build agents that can operate autonomously while remaining fully compliant and auditable.

5. Pilot & Hardening

Before full-scale deployment, we conduct controlled pilots:

- Deploy agents in limited environments to validate functionality, accuracy, and user adoption.

- Conduct security hardening, including penetration testing, adversarial input simulations, and validation of access controls.

- Test workflow integration to ensure agents communicate properly with existing enterprise systems.

- Monitor performance under load conditions to identify bottlenecks or failure points.

- Collect user and stakeholder feedback to refine agent behavior and interaction patterns.

Piloting ensures that the AI agents meet real-world performance and compliance standards before going live.

6. Deployment & Support

Finally, agents are rolled out to production with ongoing support and optimization:

- Implement monitoring dashboards for real-time visibility into agent operations, errors, and performance metrics.

- Maintain logging pipelines for full auditability and regulatory compliance.

- Provide governance documentation, including operational procedures, escalation protocols, and versioning records.

- Schedule continuous updates and optimization, adapting to changes in business processes, regulations, or model improvements.

- Ensure support for scaling, enabling additional agents as business needs grow.

After deployment, optimization becomes an ongoing discipline. We continuously analyze performance metrics, including task completion accuracy, latency, human override frequency, and exception patterns. These signals help identify opportunities to refine prompt strategies, adjust orchestration logic, or retrain domain-specific components. Over time, the AI agent ecosystem matures — shifting from assistive augmentation toward higher levels of controlled autonomy, guided by governance frameworks and measurable ROI benchmarks.
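
The metrics mentioned above can be computed from per-run records with very little code. This sketch assumes a hypothetical record shape with `correct`, `overridden`, and `latency_ms` keys; real pipelines would read these from the logging and observability layer.

```python
from statistics import mean


def summarize(runs: list) -> dict:
    """Aggregate per-run records into the headline operational metrics."""
    if not runs:
        raise ValueError("no runs to summarize")
    total = len(runs)
    return {
        "task_accuracy": sum(1 for r in runs if r["correct"]) / total,
        "override_rate": sum(1 for r in runs if r["overridden"]) / total,
        "mean_latency_ms": mean(r["latency_ms"] for r in runs),
    }


# Hypothetical run records, as they might be read back from the audit log.
runs = [
    {"correct": True, "overridden": False, "latency_ms": 100},
    {"correct": True, "overridden": True, "latency_ms": 200},
    {"correct": False, "overridden": False, "latency_ms": 300},
    {"correct": True, "overridden": False, "latency_ms": 400},
]
metrics = summarize(runs)
```

A falling override rate over several review periods is one reasonable signal that a workflow may be ready for a higher autonomy level, provided accuracy holds steady.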

Enterprise AI is not a one-time project. It is an evolving capability that must adapt to organizational growth, regulatory changes, and shifting operational priorities. Our process ensures that every AI agent is not only effective at launch but continues to deliver value and maintain compliance over time.

FAQ

Are enterprise AI agents secure?

Yes — when designed with layered security, strict governance, and continuous monitoring.

Should we start with a platform or custom development?

For simple use cases, platforms may suffice. For regulated, mission-critical workflows, custom architecture is often required.

How long does implementation take?

Pilot deployments may take weeks. A full enterprise rollout may take several months, depending on the complexity.

How do we measure ROI from enterprise AI agents?

ROI is measured through operational efficiency and risk reduction. Key metrics include reduced processing time, lower error rates, faster decision cycles, improved compliance accuracy, and scalability without increasing headcount. In regulated industries, preventing compliance violations alone can justify the investment.

Can enterprise AI agents integrate with legacy systems?

Yes. Enterprise AI agents can integrate with legacy environments through secure APIs, middleware layers, event-driven architecture, or controlled database access. With proper architectural planning, AI systems can coexist with existing infrastructure without requiring full system replacement.

How do we maintain control as AI agents become more autonomous?

Control is maintained through role-based permissions, human-in-the-loop approvals, confidence thresholds, monitoring dashboards, and strict governance policies. Autonomy is increased gradually based on measurable performance and risk tolerance, ensuring AI remains accountable and auditable.

Bottom Line

Choosing an enterprise AI partner is ultimately a decision about accountability. AI agents will influence decisions, automate workflows, and interact with sensitive systems. Organizations need a partner who understands not only machine learning models, but enterprise architecture, compliance strategy, integration complexity, and long-term operational ownership. Jetruby positions itself not as a vendor delivering isolated AI features, but as a long-term engineering partner responsible for designing, implementing, and evolving secure multi-agent systems that scale with your business.